ICRA 2026

Learning Controlled Separation of Small Objects Between Two Fingers with a Tactile Skin

This site complements our paper Learning Controlled Separation of Small Objects Between Two Fingers with a Tactile Skin by Ulf Kasolowsky and Berthold Bäuml accepted for the 2026 IEEE International Conference on Robotics and Automation.

Abstract

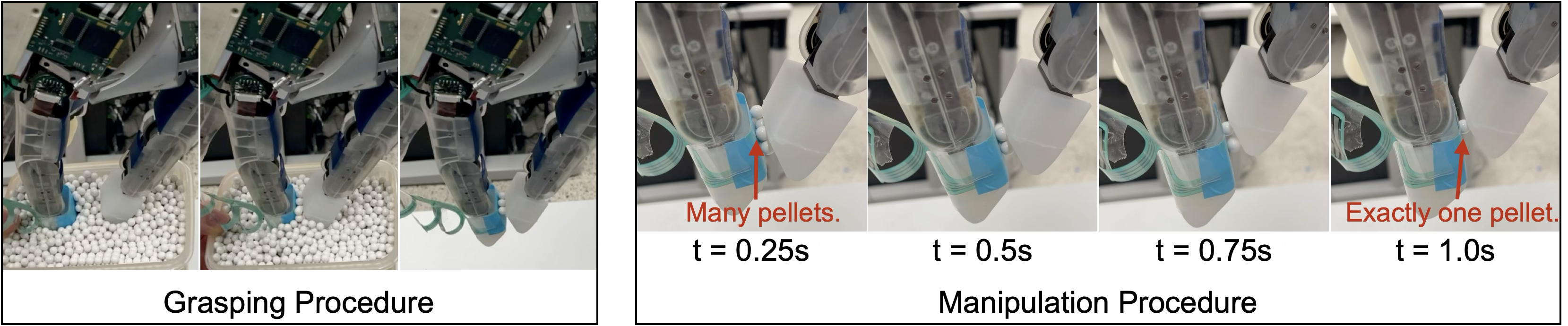

We introduce and solve the novel task of controlled separation of small objects with two fingers of a multi-purpose robotic hand: after grasping into a box of small objects, the task is to drop as many of them until a desired number larger or equal to 1 remains between the fingers. Here, “small” means relative to the width of the fingers (or grippers) but also in absolute terms, in our case handling little pellets with a diameter of only 6mm. We show that the task can be performed purely tactile using a spatially-resolved tactile skin on a fingertip. The separation policy is trained in simulation using reinforcement learning with a simple natural reward. In simulation experiments, we provide an exhaustive analysis of the benefits of using spatially-resolved tactile feedback: while an ideal (high-resolution) tactile sensor allows solving the task almost perfectly, a sensor with lower spatial resolution (here 4x4 taxels) still leads to an improvement of up to 20% compared to using only the fingers’ joint sensors. For this analysis, we further train an estimator alongside the policy that predicts the ground truth contact positions. Finally, we demonstrate the successful sim-to-real transfer for the DLR-Hand II equipped with a tactile skin.

Cite this paper as:

@inproceedings{kasolowsky2026,

author = {Ulf Kasolowsky and Berthold B{\"a}uml},

title = {Learning Controlled Separation of Small Objects Between Two Fingers with a Tactile Skin},

year = {2026}

}

Domain Randomization

We distinguish two types of domain randomization: Inter-episode and intra-episode randomizations. The first type refers to parameters sampled at the beginning of an episode and then kept constant. The latter is sampled periodically throughout an episode. While \(N(\mu, \sigma)\) denotes a normal distribution with mean \(\mu\) and standard deviation \(\sigma\), \(U(a, b)\) and \(LogU(a, b)\) denote a uniform and log-uniform distribution in the range from \(a\) to \(b\), respectively.

Finger Parameters

| Parameter | Unit | Type | Distribution | ||

|---|---|---|---|---|---|

| Joint offsets | $$q_\text{off}$$ | $$\text{rad}$$ | inter | $$U(-0.04, 0.04)$$ | |

| Joint noise | $$q_\text{noise}$$ | $$\text{rad}$$ | intra | $$N(0.0, 0.02)$$ | |

| Proportional gain | $$K_p$$ | $$\text{N}\,\text{m}\,\text{rad}^{-1}$$ | inter | $$U(4.8, 5.2)$$ | |

| Damping gain | $$K_d$$ | $$\text{N}\,\text{m}\,\text{s}\,\text{rad}^{-1}$$ | inter | $$U(0.26, 0.33)$$ | |

| Parasitic stiffness | $$K_e$$ | $$\text{N}\,\text{m}\,\text{rad}^{-1}$$ | inter | $$U(15, 35)$$ | |

| Stick friction | $$\mu_{\text{stick},1/2}$$ | $$\text{N}\,\text{m}$$ | inter | $$U(0.01, 0.03)$$ | |

| $$\mu_{\text{stick},3}$$ | $$\text{N}\,\text{m}$$ | inter | $$U(0.03, 0.05)$$ | ||

| Lateral friction | $$\mu_\text{lat}$$ | $$1$$ | inter | $$U(0.8, 1.5)$$ | |

| Spinning friction | $$\mu_\text{spin}$$ | $$1$$ | inter | $$U(0.0, 0.2)$$ | |

| Rolling friction | $$\mu_\text{roll}$$ | $$1$$ | inter | $$U(0.0, 0.1)$$ | |

Object Parameters

| Parameter | Unit | Type | Distribution | ||

|---|---|---|---|---|---|

| Lateral friction | $$\mu_\text{lat}$$ | $$1$$ | inter | $$U(0.8, 1.5)$$ | |

| Spinning friction | $$\mu_\text{spin}$$ | $$1$$ | inter | $$U(0.0, 0.2)$$ | |

| Rolling friction | $$\mu_\text{roll}$$ | $$1$$ | inter | $$U(0.0, 0.1)$$ | |

Skin Parameters

| Parameter | Unit | Type | Distribution | |

|---|---|---|---|---|

| Sensor position | $$\Delta x$$ | $$\text{mm}$$ | inter | $$U(0.25, -0.25)$$ |

| $$\Delta y$$ | $$\text{mm}$$ | inter | $$U(-0.25, 0.25)$$ | |

| Sensor orientation | $$\gamma$$ | $$^\circ$$ | inter | $$U(-2.3, 2.3)$$ |

| Elasticity | $$E$$ | $$\text{MPa}\,\text{m}^{-1}$$ | inter | $$U(100, 500)$$ |

| Scale | $$S$$ | $$\text{N}^{-1}$$ | inter | $$U(70, 100)$$ |

| Scale Offset | $$\Delta S_j$$ | $$\text{N}^{-1}$$ | inter | $$U(-7.5, 7.5)$$ |

| Taxel offsets | $$T_\text{off}$$ | $$1$$ | inter | $$U(-2.5, 2.5)$$ |

| Noise range | $$\sigma_\text{taxel}$$ | $$1$$ | inter | $$U(0, 2.5)$$ |

| Taxel noise | $$T_\text{noise}$$ | $$1$$ | intra | $$N(0, \sigma_\text{noise})$$ |

Learning Configuration

To train the policies, we relied on the Proximal-Policy-Optimization algorithm (PPO).

| Parameter | Value |

|---|---|

| Number of environments | $$160$$ |

| Total Timesteps | $$8000000$$ |

| Num Steps per Rollout | $$60000$$ |

| Batch Size | $$4000$$ |

| Learning Rate | $$0.0003$$ |

| Num Epochs | $$5$$ |

| Discount Factor | $$0.99$$ |

| General Advantage Estimator Lambda | $$0.95$$ |

| Maximum Gradient Norm | $$0.5$$ |

| Value Function Coefficient | $$0.5$$ |